Are your mobile robots still hitting stuff?

Environmental awareness directly impacts your mobile robot’s ability to navigate obstacles and complete missions. Collisions and unplanned stops drastically reduce the robot’s throughput and, by extension, its value.

Safety LiDAR systems were once the norm for robot vision. But safety is no longer a pressing issue for developers – we’ve solved that problem. The next frontier is improving efficiency by reducing those unplanned stops and collisions.

3D camera systems and the appropriate vision technology increase your robot’s speed and throughput. But developers still experience a great deal of friction when trying to adopt this technology.

That’s where ifm can help.

In this article, we’ll talk about 3D camera systems, the challenges they pose for autonomous robotics engineers, and ifm’s holistic solution platform that makes it easier for you to protect your robot.

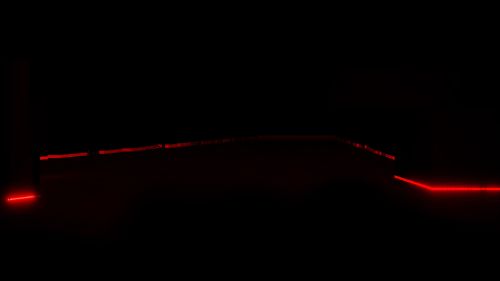

Would your robot hit this?

The two images above show different viewpoints adopted by mobile robots. The image on the left shows the viewpoint of a robot utilizing safety LiDAR. The image on the right shows the viewpoint of a robot utilizing 3D camera technology.

The problem? Obstacles exist above and below the safety LiDAR.

A mobile robot employing just 2D LiDAR technology can detect objects only within its direct line of sight (the red lines). A mobile robot with 3D camera technology also sees the previously obscured objects above and below that line.
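
The geometry behind this can be shown with a minimal sketch. It is an illustration only, not ifm's software: the `Obstacle` type, the function names, and every height in `SCENE` are hypothetical, and the model ignores range, horizontal field of view, and occlusion. It assumes the 2D scanner sees a single horizontal plane while the 3D camera covers a vertical band.

```python
from dataclasses import dataclass


@dataclass
class Obstacle:
    name: str
    z_min: float  # lowest point above the floor, in metres
    z_max: float  # highest point above the floor, in metres


def seen_by_planar_scan(obstacle: Obstacle, scan_height: float) -> bool:
    """A 2D scanner only detects objects that cross its single scan plane."""
    return obstacle.z_min <= scan_height <= obstacle.z_max


def seen_by_3d_camera(obstacle: Obstacle, z_low: float, z_high: float) -> bool:
    """A 3D camera covers a vertical band; any overlap with the band is visible."""
    return obstacle.z_max >= z_low and obstacle.z_min <= z_high


def missed_by_planar_scan(scene, scan_height, z_low, z_high):
    """Names of obstacles the 3D camera sees but the planar scan does not."""
    return [
        o.name
        for o in scene
        if seen_by_3d_camera(o, z_low, z_high)
        and not seen_by_planar_scan(o, scan_height)
    ]


# Hypothetical scene; all heights are illustrative values, not measurements.
SCENE = [
    Obstacle("pallet on floor", 0.00, 0.14),  # entirely below the scan plane
    Obstacle("table top", 0.70, 0.75),        # entirely above the scan plane
    Obstacle("shelf upright", 0.00, 2.00),    # crosses the scan plane
]

if __name__ == "__main__":
    # Scan plane at 0.20 m, camera band from the floor up to 1.5 m.
    print(missed_by_planar_scan(SCENE, 0.20, 0.0, 1.5))
    # -> ['pallet on floor', 'table top']
```

In this toy scene, the low pallet and the overhanging table top never cross the scan plane, so only the 3D camera reports them.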

A comprehensive 360-degree field of view with sensors in multiple orientations is becoming standard for preventing collisions. It can give your robots the edge they need over your competitors.

So why has it been so difficult to implement 3D vision solutions?

The friction behind adopting perception technology

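The three main reasons developers experience friction when adopting 3D technology are:

- Integrating multiple modalities into the perception stack
- Needing to become a 3D camera expert to integrate the cameras effectively
- The lack of a good off-the-shelf solution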

We designed ifm’s new O3R camera and perception stack to address these specific concerns:

Problem: Integrating multiple modalities into your perception stack

Robots make unexpected stops when they mistake something like a dust particle for an obstacle. The solution is integrating more cameras for a more comprehensive perspective.

But building a multi-modal perception stack poses new challenges. These include sensor synchronization and fusion, among other resource-heavy tasks.
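
To give a sense of what synchronization involves, here is a minimal sketch of one common approach: pairing frames from two cameras by nearest timestamp. It is an assumption for illustration, not a description of how the O3R platform synchronizes its cameras. The function names, the millisecond timestamps, and the tolerance are all hypothetical.

```python
from bisect import bisect_left


def nearest_index(timestamps, t):
    """Index of the entry in the sorted list `timestamps` closest to `t`."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Pick the closer of the two neighbours; ties go to the earlier frame.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1


def match_frames(reference, other, tolerance):
    """Pair each reference timestamp with the closest timestamp in `other`.

    Both lists must be sorted. Pairs further apart than `tolerance` are
    dropped, so a late or missing frame never gets fused with stale data.
    """
    pairs = []
    if not other:
        return pairs
    for t in reference:
        j = nearest_index(other, t)
        if abs(other[j] - t) <= tolerance:
            pairs.append((t, other[j]))
    return pairs


if __name__ == "__main__":
    # Hypothetical capture times in milliseconds for two cameras.
    camera_a = [0, 50, 100, 150]
    camera_b = [4, 61, 98, 190]
    print(match_frames(camera_a, camera_b, tolerance=10))
    # -> [(0, 4), (100, 98)]
```

Even this simplified version has to decide what to do with frames that have no close partner, and a real stack repeats that work for every camera pair before fusion can start.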

Solution: A holistic perception solution

The O3R perception platform allows integration of up to six cameras. You choose the right hardware and configuration. Our platform streamlines their synchronization, communication, and processing.

Problem: You must become a 3D camera expert to integrate them effectively

Developing an effective obstacle detection system means addressing two very challenging problems: false positives, which we already discussed, and floor segmentation.

Robots have trouble distinguishing the floor from other objects. They also misjudge distance due to light reflecting from the floor. Solving these problems means becoming an expert in the physics behind them. If that’s not your expertise, you’ll spend a lot of time and resources that you could put toward other aspects of your build.
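
A naive version of floor segmentation can be sketched in a few lines, which also shows why it is fragile. This is a simplified illustration and not ifm's algorithm. It assumes a perfectly flat floor, a known mounting height and downward pitch, and a camera frame with x forward and z up. The function names and the 3 cm tolerance are hypothetical.

```python
import math


def height_above_floor(point, mount_height, pitch_down):
    """Height of a camera-frame point above the floor, in metres.

    `point` is (x, y, z) in the camera frame: x forward, y left, z up.
    `pitch_down` is the downward tilt of the camera in radians.
    """
    x, _, z = point
    return mount_height - math.sin(pitch_down) * x + math.cos(pitch_down) * z


def classify_point(point, mount_height, pitch_down, tolerance=0.03):
    """Label a point as 'floor', 'obstacle', or 'below_floor'."""
    h = height_above_floor(point, mount_height, pitch_down)
    if h > tolerance:
        return "obstacle"
    if h < -tolerance:
        # Apparently under the floor: possibly a drop-off or a bad range reading.
        return "below_floor"
    return "floor"


if __name__ == "__main__":
    # Camera 0.5 m above the floor, tilted 30 degrees downward.
    height, pitch = 0.5, math.radians(30)
    for point in [(1.0, 0.0, 0.0), (0.5, 0.0, 0.0), (1.5, 0.0, 0.0)]:
        print(point, classify_point(point, height, pitch))
    # -> floor, obstacle, below_floor (in that order)
```

The fixed threshold only works while the mounting calibration is exact and the floor is flat. A small pitch error, a ramp, or a distorted range reading from a reflective floor shifts points across the tolerance band, which is where the real difficulty lies.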

Solution: Hire a 3D camera expert instead

ifm already has that expertise in-house as a world leader in sensor manufacturing. We adapt and improve our technologies from other industries to quickly bring innovative solutions to robotics.

Problem: A good off-the-shelf solution doesn’t exist

3D cameras are less expensive and more available than ever. But cameras designed for general or almost-universal use won’t solve your specific physics problems.

Solution: A 3D camera designed exclusively for AMR applications

Our perception platform uses hardware and software designed specifically for mobile robots. It consolidates perception, computation, and solutions into a unified platform, making integration and maintenance virtually effortless. This way, you can focus on your core development goals.

Gain a competitive edge

Ready to learn more about how ifm’s O3R perception platform makes 3D camera system integration fast and easy? Fill out the form or email Tim McCarver directly at tim.mccarver@ifm.com.