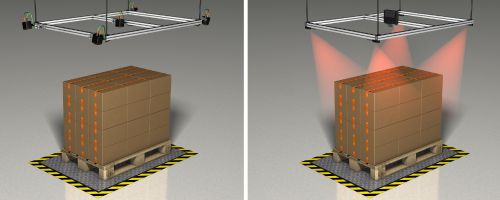


O3R Perception Platform

The perception platform at a glance

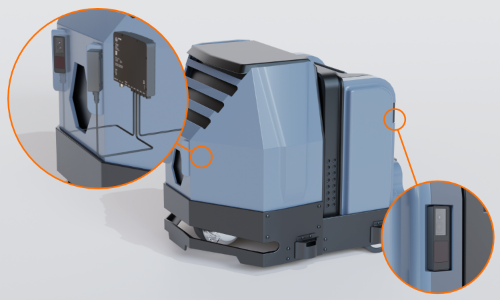

The O3R perception platform is a multi-sensor and camera gateway with the size and cost structure of a consumer product and the long-term availability and robustness of an industrial product. Up to six camera heads can be connected to the central processing unit via FPD-Link, and additional sensors such as radar or lidar can be connected via a Gigabit Ethernet interface. Because the cameras can be installed in flexible positions and arrangements, different areas can be monitored as required. For example, the platform can help prevent collisions with obstacles that protrude into the travel path above the field of view of the safety scanner.

The powerful central edge compute unit offers enough capacity to run your own algorithms or ready-made functions such as collision avoidance or pallet detection.


Highest degree of automation in the automotive industry

The autonomous vehicle (AV) industry is pursuing Level 5 autonomy: full automation that requires no driver interaction and would allow a general consumer to purchase an AV.

- Manufacturers recognize that Level 5 is attainable only with a perception stack that lets the vehicle perceive its environment better.

- They therefore take a multi-modal approach to perception around the vehicle.

- Each modality is designed to overcome the "weaknesses" of another, creating a robust platform for best-in-class environmental awareness.

Challenges in mobile robotics

Autonomy is not new to mobile robotics; it was first introduced in the 1950s.

- Unlike the AV industry, cost points are a barrier to adoption for mobile robots.

- Mobile robots require a large up-front investment from the user, which lengthens the time needed to achieve a strong ROI.

- Manufacturers are forced to compromise on hardware selection, focusing primarily on safety, in order to reduce bill of materials (BoM) cost.

- Ultimately, this limits the robot's overall flexibility, which in turn reduces its capability.

What if you didn’t have to compromise?

Taking a page from the AV "book"

The AV industry is not wrong in its approach to reaching Level 5 autonomy. Better environmental awareness leads to more flexibility and better overall operation of an AV. The same capability should be available to the mobile robot industry.

For this to become a reality, the challenges of multi-modal, multi-camera applications, including sensor synchronization and fusion, must be overcome. The only way to reduce the total cost of ownership (TCO) of perception platforms is to simplify the design and integration of multi-modal systems.
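Sensor synchronization is one of the challenges named above. As a rough illustration of what it involves, the following minimal sketch groups frames from several streams by nearest timestamp within a tolerance. It assumes that all timestamps are already expressed on a common clock (in seconds); the stream names, the tolerance and the function names are hypothetical, and this is not the O3R API.

```python
from bisect import bisect_left
from typing import Dict, List, Optional


def nearest(timestamps: List[float], t: float) -> Optional[int]:
    """Return the index of the timestamp closest to t in a sorted list, or None if empty."""
    if not timestamps:
        return None
    i = bisect_left(timestamps, t)
    # The closest value is either just before or at the insertion point.
    candidates = [j for j in (i - 1, i) if 0 <= j < len(timestamps)]
    return min(candidates, key=lambda j: abs(timestamps[j] - t))


def match_frames(
    streams: Dict[str, List[float]], reference: str, tolerance: float
) -> List[Dict[str, float]]:
    """For each frame of the reference stream, pick the closest frame of every other stream.

    A group is kept only if every stream has a frame within `tolerance` seconds
    of the reference frame; otherwise the reference frame is dropped.
    """
    groups = []
    for t_ref in streams[reference]:
        group = {reference: t_ref}
        for name, stamps in streams.items():
            if name == reference:
                continue
            j = nearest(stamps, t_ref)
            if j is None or abs(stamps[j] - t_ref) > tolerance:
                break  # one stream has no matching frame: discard this group
            group[name] = stamps[j]
        else:
            groups.append(group)
    return groups


if __name__ == "__main__":
    # Hypothetical timestamps (seconds) from two camera heads and a lidar.
    streams = {
        "cam0": [0.000, 0.050, 0.100],
        "cam1": [0.002, 0.049, 0.130],
        "lidar": [0.001, 0.051, 0.099],
    }
    for group in match_frames(streams, reference="cam0", tolerance=0.005):
        print(group)
```

In this example, the third `cam0` frame is dropped because `cam1` has no frame within 5 ms of it. Production systems typically also rely on hardware triggering or clock synchronization so that such gaps stay rare.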

The O3R perception platform was designed to fulfill this task.

The O3R platform is the comprehensive solution for centralised, synchronised processing of image and sensor data in autonomous mobile robots such as AGVs. Simplified integration and reliable interaction of cameras and sensors enable a robust implementation of key functions such as collision avoidance, navigation and positioning. The platform can also analyse and dimension stationary objects, a task that benefits from using several cameras. Examples include measuring pallets, logs, packages or suitcases.
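To give an idea of what dimensioning a stationary object can involve, here is a deliberately simple sketch. It computes the axis-aligned length, width and height of the 3D points lying above the floor. It assumes a point cloud already transformed into a floor-aligned frame (z pointing up, floor at `floor_z`, units in metres) and containing only the object and the floor. The function name and thresholds are hypothetical, and a real application would also filter noise and fit an oriented box.

```python
from typing import Iterable, Tuple

Point = Tuple[float, float, float]


def box_dimensions(
    points: Iterable[Point], floor_z: float = 0.0, min_height: float = 0.02
) -> Tuple[float, float, float]:
    """Return (length, width, height) of the points above the floor.

    Points closer than `min_height` to the floor are treated as floor and discarded.
    Length and width are axis-aligned extents in x and y; height is measured from the floor.
    """
    above = [p for p in points if p[2] - floor_z > min_height]
    if not above:
        raise ValueError("no object points above the floor")
    xs, ys, zs = zip(*above)
    return (max(xs) - min(xs), max(ys) - min(ys), max(zs) - floor_z)


if __name__ == "__main__":
    # Hypothetical points: two floor points plus the corners and centre of a pallet-sized box.
    cloud = [
        (0.0, 0.0, 0.0), (2.0, 2.0, 0.0),
        (0.0, 0.0, 0.144), (1.2, 0.0, 0.144), (0.0, 0.8, 0.144), (1.2, 0.8, 0.144),
        (0.6, 0.4, 0.1),
    ]
    print(box_dimensions(cloud))
```

Combining several camera heads helps here because a single viewpoint often sees only part of the object, which leads to underestimated extents.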

Camera head with imager developed in-house

ifm offers suitable, high-performance camera heads as part of the platform solution. The 2D/3D cameras are available with an opening angle of 60° or 105° and are equipped with the latest time-of-flight imager from pmdtechnologies ag, the ifm group company that develops all sensors for ifm's vision products and adapts them precisely to the respective requirements. Thanks to the modulated infrared light, the 2D/3D camera detects objects with maximum reliability even under increased exposure to ambient light.
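As background on how modulated light yields distance, the sketch below shows the textbook relation for continuous-wave time-of-flight. The measured phase shift φ between emitted and received light maps to distance as d = c·φ / (4π·f), and the phase wraps around at the unambiguous range c / (2f). It assumes a single modulation frequency, and the 60 MHz value in the example is a hypothetical choice, not an O3R specification. Real imagers typically combine several frequencies to extend the range.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def unambiguous_range(f_mod_hz: float) -> float:
    """Maximum distance before the measured phase wraps around: c / (2 * f)."""
    return SPEED_OF_LIGHT / (2.0 * f_mod_hz)


def phase_to_distance(phase_rad: float, f_mod_hz: float) -> float:
    """Distance for a phase shift in [0, 2*pi): d = c * phase / (4 * pi * f)."""
    if not 0.0 <= phase_rad < 2.0 * math.pi:
        raise ValueError("phase must lie in [0, 2*pi)")
    return SPEED_OF_LIGHT * phase_rad / (4.0 * math.pi * f_mod_hz)


if __name__ == "__main__":
    f_mod = 60e6  # hypothetical modulation frequency of 60 MHz
    print(f"unambiguous range: {unambiguous_range(f_mod):.3f} m")
    print(f"distance at phase pi: {phase_to_distance(math.pi, f_mod):.3f} m")
```

A phase shift of π corresponds to half the unambiguous range, about 1.25 m at 60 MHz. An object at 3 m would therefore appear at roughly 0.5 m unless a second frequency resolves the ambiguity.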

Powerful and open: the central unit for sensory processing

The core of the system is a powerful computing unit called the Video Processing Unit (VPU). It is based on Yocto Linux and an NVIDIA Jetson TX2 and supports open development environments such as ROS and Docker. Up to six camera heads can be connected to the VPU. Additional sensors, for example ultrasonic sensors that detect glass surfaces such as doors or partition walls, can be connected via a Gigabit Ethernet interface. All the relevant “senses” an AGV needs for safe autonomous navigation are thus available at a central point.

The O3R software architecture supports both pre-development and series development with a rich selection of software tools and numerous interfaces. Thanks to its Docker-based architecture, open development environments such as Python, ROS, CUDA and C++ are supported.

- Linux is the most commonly used OS in robotics. Auxiliary devices must speak the same language.

- Containers give the developer full flexibility in programming language and environment. Development time is reduced when working in a familiar software environment.

- ROS is a common middleware used in development. ROS 2 provides the potential to move from development to deployment.

- Powerful tools such as CUDA and JetPack are fully deployable on the NVIDIA-based VPU.